That's unfortunate. Having Tumblr as your frontend for posting and curating feeds, with WordPress as the backend and integrated with decentralized networks like the Fediverse for distribution would've been amazing. It sounds like Tumblr is still strategic to Automattic and as more people move away from the centralized social platforms and towards their own corners of the internet, having these differente building blocks in place will be even more important.

I’ve long advocated selling off some federal land...Most of this “public land” is never used by the public. Selling some of it would actually make it more accessible and useful to real people.

How about no. If privatization means, John and Jane Doe can purchase some land for their homestead is one thing. We've tried that before by the way - Homestead Act. In reality, it means some private equity firm or hedge fund buying the land, building expensive and shoddy cookit-cutter condos, and selling you a subscription for a roof over your head. I say condos because it's hot and there's not a lot of water in Vegas, so keeping those brand new AI data centers cool might be a problem.

Also, if you haven't seen that area that Alex highlights in his post, it seems like a deserted landscape. And yes, the terrain can be rough, but actually, it's a beautiful landscape that is best left undisturbed.

https://jyopari.github.io/posts/seal

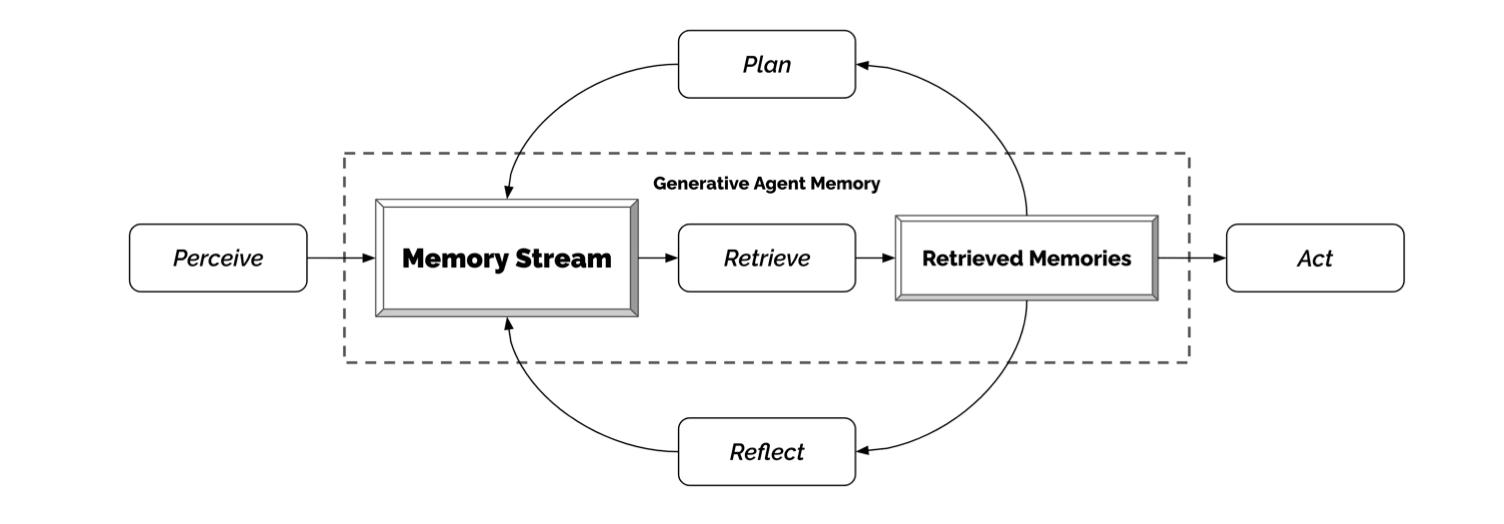

Large language models (LLMs) are powerful but static; they lack mechanisms to adapt their weights in response to new tasks, knowledge, or examples. We introduce Self-Adapting LLMs (SEAL) 🦭, a framework that enables LLMs to self-adapt by generating their own finetuning data and update directives. Given a new input, the model produces a self-edit — a generation that may restructure the information in different ways, specify optimization hyperparameters, or invoke tools for data augmentation and gradient-based updates. Through supervised finetuning (SFT), these self-edits result in persistent weight updates, enabling lasting adaptation. To train the model to produce effective self-edits, we use a reinforcement learning loop, using the downstream performance of the updated model as the reward signal. Unlike prior approaches that rely on separate adaptation modules or auxiliary networks, SEAL directly uses the model's generation to parameterize and control its own adaptation process. Experiments on knowledge incorporation and few-shot generalization show that SEAL is a promising step toward language models capable of self-directed adaptation in response to new data.

We demonstrate SEAL in two domains: (1) Knowledge Incorporation, where the model integrates new factual information by generating logical implications as synthetic data, and (2) Few-Shot Learning, where the model autonomously selects data augmentations and training hyperparameters to adapt to new abstract reasoning tasks.

https://www.anthropic.com/engineering/built-multi-agent-research-system

Great article.

TLDR: These are largely engineering problems.

The journey of this multi-agent system from prototype to production taught us critical lessons about system architecture, tool design, and prompt engineering. A multi-agent system consists of multiple agents (LLMs autonomously using tools in a loop) working together. Our Research feature involves an agent that plans a research process based on user queries, and then uses tools to create parallel agents that search for information simultaneously. Systems with multiple agents introduce new challenges in agent coordination, evaluation, and reliability.

Benefits of a multi-agent system

The essence of search is compression: distilling insights from a vast corpus. Subagents facilitate compression by operating in parallel with their own context windows, exploring different aspects of the question simultaneously before condensing the most important tokens for the lead research agent. Each subagent also provides separation of concerns—distinct tools, prompts, and exploration trajectories—which reduces path dependency and enables thorough, independent investigations.

Once intelligence reaches a threshold, multi-agent systems become a vital way to scale performance. For instance, although individual humans have become more intelligent in the last 100,000 years, human societies have become exponentially more capable in the information age because of our collective intelligence and ability to coordinate. Even generally-intelligent agents face limits when operating as individuals; groups of agents can accomplish far more.

Our internal evaluations show that multi-agent research systems excel especially for breadth-first queries that involve pursuing multiple independent directions simultaneously.

Multi-agent systems work mainly because they help spend enough tokens to solve the problem...Multi-agent architectures effectively scale token usage for tasks that exceed the limits of single agents.

We’ve found that multi-agent systems excel at valuable tasks that involve heavy parallelization, information that exceeds single context windows, and interfacing with numerous complex tools.

Architecture overview

Our Research system uses a multi-agent architecture with an orchestrator-worker pattern, where a lead agent coordinates the process while delegating to specialized subagents that operate in parallel.

Traditional approaches using Retrieval Augmented Generation (RAG) use static retrieval. That is, they fetch some set of chunks that are most similar to an input query and use these chunks to generate a response. In contrast, our architecture uses a multi-step search that dynamically finds relevant information, adapts to new findings, and analyzes results to formulate high-quality answers.

Prompt engineering and evaluations for research agents

Think like your agents. To iterate on prompts, you must understand their effects. To help us do this, we built simulations using our Console with the exact prompts and tools from our system, then watched agents work step-by-step.

Teach the orchestrator how to delegate. In our system, the lead agent decomposes queries into subtasks and describes them to subagents. Each subagent needs an objective, an output format, guidance on the tools and sources to use, and clear task boundaries. Without detailed task descriptions, agents duplicate work, leave gaps, or fail to find necessary information.

Scale effort to query complexity. Agents struggle to judge appropriate effort for different tasks, so we embedded scaling rules in the prompts...

Tool design and selection are critical. Agent-tool interfaces are as critical as human-computer interfaces. Using the right tool is efficient—often, it’s strictly necessary...Bad tool descriptions can send agents down completely wrong paths, so each tool needs a distinct purpose and a clear description.

Let agents improve themselves....When given a prompt and a failure mode, they are able to diagnose why the agent is failing and suggest improvements.

Start wide, then narrow down. Search strategy should mirror expert human research: explore the landscape before drilling into specifics.

Guide the thinking process....Our testing showed that extended thinking improved instruction-following, reasoning, and efficiency. Subagents also plan, then use interleaved thinking after tool results to evaluate quality, identify gaps, and refine their next query. This makes subagents more effective in adapting to any task.

Parallel tool calling transforms speed and performance....For speed, we introduced two kinds of parallelization: (1) the lead agent spins up 3-5 subagents in parallel rather than serially; (2) the subagents use 3+ tools in parallel.

Our prompting strategy focuses on instilling good heuristics rather than rigid rules. We studied how skilled humans approach research tasks and encoded these strategies in our prompts—strategies like decomposing difficult questions into smaller tasks, carefully evaluating the quality of sources, adjusting search approaches based on new information, and recognizing when to focus on depth (investigating one topic in detail) vs. breadth (exploring many topics in parallel).

Effective evaluation of agents

...evaluating multi-agent systems presents unique challenges...Because we don’t always know what the right steps are, we usually can't just check if agents followed the “correct” steps we prescribed in advance. Instead, we need flexible evaluation methods that judge whether agents achieved the right outcomes while also following a reasonable process.

Start evaluating immediately with small samples....it’s best to start with small-scale testing right away with a few examples, rather than delaying until you can build more thorough evals.

LLM-as-judge evaluation scales when done well....We used an LLM judge that evaluated each output against criteria in a rubric: factual accuracy (do claims match sources?), citation accuracy (do the cited sources match the claims?), completeness (are all requested aspects covered?), source quality (did it use primary sources over lower-quality secondary sources?), and tool efficiency (did it use the right tools a reasonable number of times?)...[we] found that a single LLM call with a single prompt outputting scores from 0.0-1.0 and a pass-fail grade was the most consistent and aligned with human judgements.

Human evaluation catches what automation misses....Adding source quality heuristics to our prompts helped resolve this issue. Even in a world of automated evaluations, manual testing remains essential.

the best prompts for these agents are not just strict instructions, but frameworks for collaboration that define the division of labor, problem-solving approaches, and effort budgets. Getting this right relies on careful prompting and tool design, solid heuristics, observability, and tight feedback loops.

Production reliability and engineering challenges

Agents are stateful and errors compound....Agents can run for long periods of time, maintaining state across many tool calls. This means we need to durably execute code and handle errors along the way. Without effective mitigations, minor system failures can be catastrophic for agents. When errors occur, we can't just restart from the beginning...We combine the adaptability of AI agents built on Claude with deterministic safeguards like retry logic and regular checkpoints.

Debugging benefits from new approaches...Adding full production tracing let us diagnose why agents failed and fix issues systematically. Beyond standard observability, we monitor agent decision patterns and interaction structures—all without monitoring the contents of individual conversations, to maintain user privacy.

Deployment needs careful coordination...we use rainbow deployments to avoid disrupting running agents, by gradually shifting traffic from old to new versions while keeping both running simultaneously.

Synchronous execution creates bottlenecks. Currently, our lead agents execute subagents synchronously, waiting for each set of subagents to complete before proceeding. This simplifies coordination, but creates bottlenecks in the information flow between agents. Asynchronous execution would enable additional parallelism: agents working concurrently and creating new subagents when needed. But this asynchronicity adds challenges in result coordination, state consistency, and error propagation across the subagents. As models can handle longer and more complex research tasks, we expect the performance gains will justify the complexity.

Conclusion

For all the reasons described in this post, the gap between prototype and production is often wider than anticipated.

Multi-agent research systems can operate reliably at scale with careful engineering, comprehensive testing, detail-oriented prompt and tool design, robust operational practices, and tight collaboration between research, product, and engineering teams who have a strong understanding of current agent capabilities.

https://swicg.github.io/activitypub-e2ee/mls

Messaging Layer Security (MLS) is an IETF standard for end-to-end encrypted (E2EE) messaging. It lets people on laptops and phones communicate with each other in a secure way that no one in between can see.

MLS is designed to use pluggable lower-level protocols. This specification defines an envelope format for distributing MLS messages through the network, and an Activity Streams 2.0 profile for the packets of application data stored inside the messages.

This specification is ready for review from both ActivityPub developers and security analysts. It’s time to start making proof-of-concept implementations and testing interoperability.

Source: https://socialwebfoundation.org/2025/06/13/mls-over-activitypub-draft/

https://simonrepp.com/faircamp/

This is such a cool project. Looks like it's super simple to get started with and it's packed with a ton of features so creators get to stay in their flow.

I also like how creators are showcased.

https://elevenlabs.io/blog/conversational-ai-2-0

Conversational AI 2.0 launches with advanced features and enterprise readiness.

- Natural turn-taking to understand the flow of conversation.

- Multilingual communication with integrated language detection

- Integrated RAG: knowledgeable agents, minimum latency, maximum privacy

- Multimodality

- Batch calls

A longstanding goal of AI research has been the creation of AI that can learn indefinitely. One tantalizing path toward that goal is an AI that improves itself by rewriting its own code, including any code responsible for learning. That idea, known as a Gödel Machine, proposed by Jürgen Schmidhuber decades ago, is a hypothetical self-improving AI. It optimally solves problems by recursively rewriting its own code when it can mathematically prove a better strategy, making it a key concept in meta-learning or “learning to learn.”

While the theoretical Gödel Machine promised provably beneficial self-modifications, its realization relied on an impractical assumption: that the AI could mathematically prove that a proposed change in its own code would yield a net improvement before adopting it. We, in collaboration with Jeff Clune’s lab at UBC, propose something more feasible: a system that harnesses the principles of open-ended algorithms like Darwinian evolution to search for improvements that empirically improve performance.We call the result the Darwin Gödel Machine (full technical report). DGMs leverage foundation models to propose code improvements, and use recent innovations in open-ended algorithms to search for a growing library of diverse, high-quality AI agents. Our experiments show that DGMs improve themselves the more compute they are provided. In line with the clear trend that AI systems that rely on learning ultimately outperform those designed by hand, there is a potential that DGMs could soon outperform hand-designed AI systems.

https://discourse.32bit.cafe/t/resources-list-for-the-personal-web/49

This list is to help guide and help those who are in every stage of their web-building journey on the personal web. This list is not meant to overwhelm you, but rather give you options and find tools, graphics, utilities, codes, and everything in between to help get you creating more on the independent web.

https://cheapskatesguide.org/articles/small-social-growth.html

Last month during Meta's antitrust trial, Mark Zuckerberg admitted that his company's focus has changed radically from where it began...[his] testimony implies that Facebook (Meta) may no longer be as much of an option if we actually want to talk to each other on line. But, neither are many of the other large social media sites.

...if companies allowed us to discover great new small blogs and small social media sites, many of us would decide to spend more time there. Then, companies would be less able to advertise to us on their own sites.

...we have been told by the mainstream media for well over a decade that small social media is dead. They say the same about small blogs, yet small blogs are actually more plentiful than ever, with over 600 million blogs on the Internet and about 7 million new blog articles published each day.

If you decide to create your own forum, do yourself a favor. The way to protect yourself and your users is by running your social media site at a domain that you own and on a server that you control, and don't agree to hand over any of your rights to anyone for any reason.

I believe large corporate-run social media sites are vulnerable in a way they have not been for twenty years. Their users have reached a state of extreme dissatisfaction with their "enshittified" platforms, and they are looking for something, anything, better.

But each of you who are reading these words can help end this situation by creating your own small social media site.

All you need to start your own small site is a domain name, a server that you can buy, rent, or set up on an old computer, perhaps social media software (see above), patience, a willingness to work, and ideas for ways of notifying potential users of the existence of your site.

Run your new site as you and your users see fit, and make it far superior to Facebook, Twitter (X), Reddit, or any of the other major sites whose owners think you have no other options.

https://audmcname.com/comics/rss-is-not-dead-yet/

Illustrating the history of RSS. Created in collaboration with A. Service, and debuted at VanCAF 2023

https://support.mozilla.org/en-US/kb/future-of-pocket

I haven't used Pocket in a long time but sad to hear it's shutting down. It's great they're offering the option of letting you export your data and platforms like Micro.blog are making it easy to host that content on your own site (assuming you're using Micro.blog).

For bookmarking solutions, I've been using my website as well as messages-to-self on Element. What's on my website is the content that I really want to make sure I archive, whereas the messages to self I treat more as a read-it-later solution. Eventually, some of those make it to my website. I'm working on my mobile publishing flow to simplify my bookmarking process but overall, I'm happy with my current system.

This is also another great reminder why owning your content is important.

https://agent-network-protocol.com/

Comparison of MCP and ANP: What Kind of Communication Protocol Do Agents Need?

MCP and ANP differ significantly in protocol architecture, identity authentication, and information organization.

- MCP is a typical Client-Server (CS) architecture, while ANP is a typical Peer-to-Peer (P2P) architecture.

- MCP's identity authentication is based on the OAuth standard, facilitating client access to current internet resources. ANP's identity authentication is based on the W3C DID standard, focusing on cross-platform interoperability among agents, enabling seamless connectivity.

- MCP organizes information using JSON-RPC technology, essentially API calls. ANP uses semantic web Linked-Data technology to build a data network that is easily accessible and understandable by AI.

ANP is agent-centric, where each agent has equal status, forming a decentralized agent collaboration network.

Never change Chicago.

What felt like the first week of good weather since forever ends with a dust storm.

💯💯💯. Artifacts are great. In ChatGPT when I find myself trying to format outputs as markdown, the UI gets confused and some of the content is rendered in markdown while the rest gets rendered as part of the UI which makes it unusable and hard to copy. The best way I've found to get around it is to ask it to format in org-mode or asciidoc. Claude Artifacts make this a non-issue. I also love that in addition to copying and downloading the artifact, you can also publish it as a URL.

https://hypha.coop/dripline/announcing-dp-social-inbox/

I hadn't heard of Distributed Press and this is an older post (from 2023), but the idea is interesting.

Hypha and Sutty are thrilled to announce the release of the Social Inbox, a new feature of Distributed.Press that integrates a website’s comment section with federated social media platforms like Mastodon. With the Social Inbox enabled, websites obtain their own account on the Fediverse, allowing it to automatically send out new posts to followers at the time of publication. When other users reply to posts, you can approve them to be published to the site as comments. The Social Inbox allows readers to directly engage with your posts where they already are, and gives publishers the ability to incorporate public dialogue into their websites.

https://blog.discourse.org/2025/04/discourse-and-the-fediverse/

Two years ago, we started working on a plugin that brings Discourse and the Fediverse closer together. Discourse communities are online spaces that facilitate open collaboration and communication. The Fediverse offers ways to expand the reach of Discourse communities and help them build bridges with people active in other spaces, all while keeping the conversation civil, meaningful and focused. This post will describe how the ActivityPub plugin works and how you can enable your Discourse community to connect with other communities or Fediverse users.

https://osteophage.neocities.org/writing/in-praise-of-links

Hyperlinks deserve more recognition in light of all the ways their value has been sidelined and denied.

To that end, I present a link compilation in praise of links. It includes things I agree with entirely and things I don't, spanning from the 2020s to the early 2000s, to supply a tapestry of perspectives, context, and examples on the value and importance of links. Links may have their downsides, challenges, and vulnerabilities, but my hope is that this compilation will (re)invigorate your appreciation for linking as a technology and a social practice, all the better to understand what's at stake when links are discarded and devalued.

https://thehistoryoftheweb.com/the-evolution-of-blogging/

...around 1997, when Jorn Barger launched his website, titling it “The Robot Wisdom Weblog.” Barger’s plan was to curate his favorite links from around the web, and add bits of commentary to each one. In other words, he meant to keep a log of his web experience. Hence weblog.

In their earliest days, webloggers stood as gatekeepers to the web’s ever-growing well of content. Each day, these URL pioneers would post a few new links and sprinkle in their own commentary. Blogs acted as a signpost for web users, and following a few key blogs was enough to keep track of just about everything new on the web. Many began to look at the blogging community as a brand new type of media, one that often stood far closer to an impartial truth than traditional mediums would allow for.

1999, Peter Merholz threw up his own weblog, and in the sidebar added the tagline: "I’ve decided to pronounce the word “weblog” as wee’- blog. Or “blog” for short."

In the beginning, most bloggers hand coded their sites, adding a new HTML page to their server each time they had a new entry, or just updating the homepage to include the newest links. But soon enough, tools showed up to help bloggers with the process.

...on the fringes, a new type of blog was emerging. The personal blog. These sites ditched the curated links and focused exclusively on commentary. Bloggers used their site to chronicle their personal journey, from the almost boring and banal to the weird and wonderful. This new type of blog was less an alternative media source and more akin to an online journal or diary. And these writers saw themselves not as gatekeepers to the web, but as sharers of their own identity.

New tools soon caught up with this shifting perspective. The first of the bunch to do so was LiveJournal...

Blogger came next. And it would prove to be the most popular of the early blogging tools. The platform first launched in August of 1999, but picked up steam the following year when it introduced the concept of a permalink.

These platforms made blogging open, in every sense of the word. Visitors were greeted with a single, open textarea on a free platform that was open to all to participate.

Blogger became the platform choice for a lot of writers out there. It certainly was for Mena Trott

Trott had the feeling that platforms like Blogger did not go nearly far enough.

Trott wanted her own site to stand out from the crowd. To be quintessentially hers. Blogger wouldn’t let her do that. So together with her husband, a Perl programmer, she built Movable Type...And with that move, Trott ushered in a third generation of blogging.

Movable Type allowed users to set up their blog on their own server, and have complete control over its look and feel.

Blog (the noun and verb) became a word familiar not just to web geeks, but to everyone. Lots of imitators and innovators followed in the wake of Moveable Type. Open source platforms like WordPress rose to meet the rising demand. But for a long time, Moveable Type was the standard. It fed its community with a constant stream of new features that promoted openness and freedom.

Blogging, as a community and a practice, continues to be refined to this day thanks to the dedication of web users everywhere. But its sharpest point was made the day Mena Trott decided that a blog could be more than just some text on a page. It could be one of a kind.

Your gateway to decentralized social networking protocols, resources, and community

https://huggingface.co/blog/autoround

As large language models (LLMs) and vision-language models (VLMs) continue to grow in size and complexity, deploying them efficiently becomes increasingly challenging. Quantization offers a solution by reducing model size and inference latency. Intel's AutoRound emerges as a cutting-edge quantization tool that balances accuracy, efficiency, and compatibility.

AutoRound is a weight-only post-training quantization (PTQ) method developed by Intel. It uses signed gradient descent to jointly optimize weight rounding and clipping ranges, enabling accurate low-bit quantization (e.g., INT2 - INT8) with minimal accuracy loss in most scenarios. For example, at INT2, it outperforms popular baselines by up to 2.1x higher in relative accuracy.

https://huggingface.co/nvidia/parakeet-tdt-0.6b-v2

parakeet-tdt-0.6b-v2 is a 600-million-parameter automatic speech recognition (ASR) model designed for high-quality English transcription, featuring support for punctuation, capitalization, and accurate timestamp prediction.

This XL variant of the FastConformer [1] architecture integrates the TDT [2] decoder and is trained with full attention, enabling efficient transcription of audio segments up to 24 minutes in a single pass.

https://about.flipboard.com/fediverse/fediverse-house-2025-roundup/

No Walls, Just Vibes at SXSW’s Fediverse House

Fediverse House was SXSW’s first physical gathering dedicated entirely to the constellation of interoperable social platforms powered by protocols like ActivityPub and AT Protocol. (We know “Fediverse” isn’t quite accurate to describe the whole ecosystem; in this case, it was just a convenient, fun way to name the space.)

It didn’t matter which platform you preferred or which protocol you were building on; everyone was focused on the singular goal of building a better internet.

...the real power move for creators is ownership and control of their work and livelihoods.

...identity portability is a radical shift, and...decentralization could lead to more humane social spaces.

Locate 3D transforms how AI understands physical space, delivering state-of-the-art object localization in complex 3D environments.

Operating directly on standard sensor data, Locate 3D brings spatial intelligence to robotics and augmented reality applications in real-world settings.

We present Locate 3D, a model for localizing objects in 3D scenes from referring expressions like “the small coffee table between the sofa and the lamp.” Locate 3D sets a new state-of-the-art on standard referential grounding benchmarks and showcases robust generalization capabilities. Notably, Locate 3D operates directly on sensor observation streams (posed RGB-D frames), enabling real-world deployment on robots and AR devices. Key to our approach is 3D-JEPA, a novel self-supervised learning (SSL) algorithm applicable to sensor point clouds. It takes as input a 3D pointcloud featurized using 2D foundation models (CLIP, DINO). Subsequently, masked prediction in latent space is employed as a pretext task to aid the self-supervised learning of contextualized pointcloud features. Once trained, the 3D-JEPA encoder is finetuned alongside a language-conditioned decoder to jointly predict 3D masks and bounding boxes. Additionally, we introduce Locate 3D Dataset, a new dataset for 3D referential grounding, spanning multiple capture setups with over 130K annotations. This enables a systematic study of generalization capabilities as well as a stronger model.

https://alibaba-nlp.github.io/ZeroSearch/

Effective information searching is essential for enhancing the reasoning and generation capabilities of large language models (LLMs). Recent research has explored using reinforcement learning (RL) to improve LLMs' search capabilities by interacting with live search engines in real-world environments. While these approaches show promising results, they face two major challenges: (1) Uncontrolled Document Quality: The quality of documents returned by search engines is often unpredictable, introducing noise and instability into the training process. (2) Prohibitively High API Costs: RL training requires frequent rollouts, potentially involving hundreds of thousands of search requests, which incur substantial API expenses and severely constrain scalability. To address these challenges, we introduce ZeroSearch, a reinforcement learning framework that enhances the search capabilities of LLMs without interacting with real search engines. Our approach begins with lightweight supervised fine-tuning to transform the LLM into a retrieval module capable of generating both relevant and noisy documents in response to a query. During RL training, we employ a curriculum-based rollout strategy that incrementally degrades the quality of generated documents, progressively eliciting the model’s reasoning ability by exposing it to increasingly challenging retrieval scenarios. Extensive experiments demonstrate that ZeroSearch effectively incentivizes the search capabilities of LLMs using a 3B LLM as the retrieval module. Remarkably, a 7B retrieval module achieves comparable performance to the real search engine, while a 14B retrieval module even surpasses it. Furthermore, it generalizes well across both base and instruction-tuned models of varying sizes and is compatible with a wide range of RL algorithms.

https://arxiv.org/abs/2504.16736

It'll be interesting to see once many of the exiting protocols begin to converge towards a standard. There's a ton of existing well-adopted protocol standards out there that when stitched together could create a compelling and native experience. I think eventually we'll get to a place where agents are native parts of the OSI stack and the world wide web.

The rapid development of large language models (LLMs) has led to the widespread deployment of LLM agents across diverse industries, including customer service, content generation, data analysis, and even healthcare. However, as more LLM agents are deployed, a major issue has emerged: there is no standard way for these agents to communicate with external tools or data sources. This lack of standardized protocols makes it difficult for agents to work together or scale effectively, and it limits their ability to tackle complex, real-world tasks. A unified communication protocol for LLM agents could change this. It would allow agents and tools to interact more smoothly, encourage collaboration, and triggering the formation of collective intelligence. In this paper, we provide the first comprehensive analysis of existing agent protocols, proposing a systematic two-dimensional classification that differentiates context-oriented versus inter-agent protocols and general-purpose versus domain-specific protocols. Additionally, we conduct a comparative performance analysis of these protocols across key dimensions such as security, scalability, and latency. Finally, we explore the future landscape of agent protocols by identifying critical research directions and characteristics necessary for next-generation protocols. These characteristics include adaptability, privacy preservation, and group-based interaction, as well as trends toward layered architectures and collective intelligence infrastructures. We expect this work to serve as a practical reference for both researchers and engineers seeking to design, evaluate, or integrate robust communication infrastructures for intelligent agents.

https://hamatti.org/posts/resisting-the-urge-to-rewrite-the-website/

I have half-jokingly been entertaining an idea of building a new blog: for every blog post published, you get to add one new feature. The first blog post needs to be written in a plain txt file and deployed as-is to a server. With the second post, you can choose to add something you want: maybe a bit of HTML navigation to be able to have links between the blog posts. The third post could come with Markdown support so your posts are not plain text anymore but rendered into HTML. And so on.

The idea would is that you get to “earn” feature development by writing blog posts. If you want a fancy site with bells and whistles and good tooling, you have to write and publish a lot. That way, you cannot get lost into the rabbit hole of new shiny feature development at the expense of writing.

I like this suggestion. My rewrite has been ongoing but slow. I tried vibe-coding my way to success but didn't have much luck there, so it's taking more time to do it manually. If I was doing it from scratch it wouldn't be a challenge. The challenge is making all the posts and current structure fit into what I want to website to eventually look like.

I use different tools for writing and coding. I can write my blog posts on my iPad when I’m in the library or local pub and at that time, there’s no way for me to tinker with the tools or the website itself.

Another great suggestion. A lot of my desire to rewrite stems from frictions in my current workflow as well as being able to support new and different post types. Back when I had my Duo, I didn't mind the mobile VS Code authoring experience, but now that I'm back to a single screen device, not being able to publish from my phone creates a lot of friction.

https://arxiv.org/abs/2505.00562

Learning to solve complex tasks with signal temporal logic (STL) specifications is crucial to many real-world applications. However, most previous works only consider fixed or parametrized STL specifications due to the lack of a diverse STL dataset and encoders to effectively extract temporal logic information for downstream tasks. In this paper, we propose TeLoGraF, Temporal Logic Graph-encoded Flow, which utilizes Graph Neural Networks (GNN) encoder and flow-matching to learn solutions for general STL specifications. We identify four commonly used STL templates and collect a total of 200K specifications with paired demonstrations. We conduct extensive experiments in five simulation environments ranging from simple dynamical models in the 2D space to high-dimensional 7DoF Franka Panda robot arm and Ant quadruped navigation. Results show that our method outperforms other baselines in the STL satisfaction rate. Compared to classical STL planning algorithms, our approach is 10-100X faster in inference and can work on any system dynamics. Besides, we show our graph-encoding method's capability to solve complex STLs and robustness to out-distribution STL specifications. Code is available at this https URL

https://shellsharks.com/just-put-it-on-your-blog

It’s great to have a place to share your thoughts. A place you can go back to when you want to remember something you had written or thought about before. A place you can refer people to when they have questions you’ve answered in the past. A place to be you. So, get a blog, and put all the things there.

It is great to carve out your own space in the interwebs. If you run out of ideas of what to do with your website, here's James' blog with 100+ ideas.

https://arxiv.org/abs/2505.00023

In a real-world corpus, knowledge frequently recurs across documents but often contains inconsistencies due to ambiguous naming, outdated information, or errors, leading to complex interrelationships between contexts. Previous research has shown that language models struggle with these complexities, typically focusing on single factors in isolation. We classify these relationships into four types: distracting, ambiguous, counterfactual, and duplicated. Our analysis reveals that no single approach effectively addresses all these interrelationships simultaneously. Therefore, we introduce Context Organizer (CORG), a framework that organizes multiple contexts into independently processed groups. This design allows the model to efficiently find all relevant answers while ensuring disambiguation. CORG consists of three key components: a graph constructor, a reranker, and an aggregator. Our results demonstrate that CORG balances performance and efficiency effectively, outperforming existing grouping methods and achieving comparable results to more computationally intensive, single-context approaches.

https://arxiv.org/abs/2505.00234

Many methods for improving Large Language Model (LLM) agents for sequential decision-making tasks depend on task-specific knowledge engineering--such as prompt tuning, curated in-context examples, or customized observation and action spaces. Using these approaches, agent performance improves with the quality or amount of knowledge engineering invested. Instead, we investigate how LLM agents can automatically improve their performance by learning in-context from their own successful experiences on similar tasks. Rather than relying on task-specific knowledge engineering, we focus on constructing and refining a database of self-generated examples. We demonstrate that even a naive accumulation of successful trajectories across training tasks boosts test performance on three benchmarks: ALFWorld (73% to 89%), Wordcraft (55% to 64%), and InterCode-SQL (75% to 79%)--matching the performance the initial agent achieves if allowed two to three attempts per task. We then introduce two extensions: (1) database-level selection through population-based training to identify high-performing example collections, and (2) exemplar-level selection that retains individual trajectories based on their empirical utility as in-context examples. These extensions further enhance performance, achieving 91% on ALFWorld--matching more complex approaches that employ task-specific components and prompts. Our results demonstrate that automatic trajectory database construction offers a compelling alternative to labor-intensive knowledge engineering.

https://devblogs.microsoft.com/ise/running-rag-onnxruntime-genai/

Really great to see these case studies and comparisons.

A while back we published a blog post showcasing how experiences like the AI Dev Gallery make use of ONNX Runtime and the various AI building blocks in .NET to enable a diverse set of scenarios.

For more ONNX Runtime GenAI focused C# content, you can also reference the post Using Phi-3 & C# with ONNX for text and vision samples

https://github.com/AboutRSS/ALL-about-RSS

A list of RSS related stuff: tools, services, communities and tutorials, etc.

https://openai.com/index/image-generation-api/

Today, we’re bringing the natively multimodal model that powers this experience in ChatGPT to the API via

gpt-image-1, enabling developers and businesses to easily integrate high-quality, professional-grade image generation directly into their own tools and platforms.

The

gpt-image-1model is now available globally via the Images API, with support in the Responses API coming soon.

https://ai.meta.com/blog/llama-4-multimodal-intelligence/

We’re introducing Llama 4 Scout and Llama 4 Maverick, the first open-weight natively multimodal models with unprecedented context length support and our first built using a mixture-of-experts (MoE) architecture. We’re also previewing Llama 4 Behemoth, one of the smartest LLMs in the world and our most powerful yet to serve as a teacher for our new models.

Llama 4 Scout, a 17 billion active parameter model with 16 experts, is the best multimodal model in the world in its class and is more powerful than all previous generation Llama models, while fitting in a single NVIDIA H100 GPU. Additionally, Llama 4 Scout offers an industry-leading context window of 10M and delivers better results than Gemma 3

Llama 4 Maverick, a 17 billion active parameter model with 128 experts, is the best multimodal model in its class, beating GPT-4o and Gemini 2.0 Flash across a broad range of widely reported benchmarks

After tinkering with Mistral Small 3.1, I'm excited to try Llama 4 Scout once it lands in GitHub Models and Ollama.

I don't think that Mistral Small 3.1 uses MoE architecture which should help a ton considering the 10 million token context size.

https://www.fastcompany.com/91310237/the-tumblr-revival-is-real-and-gen-z-is-leading-the-charge

Thanks to Gen Z, the site has found new life. As of 2025, Gen Z makes up 50% of Tumblr’s active monthly users and accounts for 60% of new sign-ups

Don't call it a comeback.

User numbers spiked in January during the near-ban of TikTok and jumped again last year when Brazil temporarily banned X.

To keep up with the momentum, Tumblr introduced Reddit-style Communities in December, letting users connect over shared interests like photography and video games. In January, it debuted Tumblr TV—a TikTok-like feature that serves as both a GIF search engine and a short-form video platform.

But perhaps Tumblr’s greatest strength is that it isn’t TikTok or Facebook.

For its users...that’s part of the appeal.

Tumblr's been through a rough ride, but at the core there's a lot of nice things to like about it. In previous posts, I talked about some opportunities for Tumblr as a result of replatforming on top of WordPress and its expressed intent for ActivityPub integration. In doing so, not only would it enable an intuitive front-end for publishing different kinds of posts, but it would also provide opportunities for self-hosting and a more federated and decentralized social platform.

I'm excited to see where this goes.

Related post from Business Insider's Amanda Hoover on the topic.

Awesome post from my friend maho.dev

I’ve introduced customizable templates for note generation. This enhancement allows the full content of a blog post to be included directly in ActivityPub notes, moving beyond mere link sharing—a practice often associated with bots—and leveraging the full potential of the Fediverse.

This is cool. I'd like something better for my POSSE setup. Might give this a try.

https://blog.google/technology/developers/gemma-3/

Today, we're introducing Gemma 3, a collection of lightweight, state-of-the-art open models built from the same research and technology that powers our Gemini 2.0 models. These are our most advanced, portable and responsibly developed open models yet. They are designed to run fast, directly on devices — from phones and laptops to workstations — helping developers create AI applications, wherever people need them. Gemma 3 comes in a range of sizes (1B, 4B, 12B and 27B), allowing you to choose the best model for your specific hardware and performance needs.

Build with the world's best single-accelerator model: Gemma 3 delivers state-of-the-art performance for its size, outperforming Llama3-405B, DeepSeek-V3 and o3-mini in preliminary human preference evaluations on LMArena’s leaderboard. This helps you to create engaging user experiences that can fit on a single GPU or TPU host.

Create AI with advanced text and visual reasoning capabilities: Easily build applications that analyze images, text, and short videos, opening up new possibilities for interactive and intelligent applications.

Handle complex tasks with an expanded context window: Gemma 3 offers a 128k-token context window to let your applications process and understand vast amounts of information.

Create AI-driven workflows using function calling: Gemma 3 supports function calling and structured output to help you automate tasks and build agentic experiences.

https://cohere.com/blog/command-a

Today, we’re introducing Command A, a new state-of-the-art generative model optimized for demanding enterprises that require fast, secure, and high-quality AI. Command A delivers maximum performance with minimal hardware costs when compared to leading proprietary and open-weights models, such as GPT-4o and DeepSeek-V3. For private deployments, Command A excels on business-critical agentic and multilingual tasks, while being deployable on just two GPUs, compared to other models that typically require as many as 32.

Its 256k context length (2x most leading models) can handle much longer enterprise documents. Other key features include Cohere’s advanced retrieval-augmented generation (RAG) with verifiable citations, agentic tool use, enterprise-grade security, and strong multilingual performance.

The next generation of Cohere models will help power a range of AI applications for customers across industries like finance, healthcare, manufacturing, energy, and the public sector. In particular, they will seamlessly integrate with North, our secure AI agents platform to unlock the full potential of your company data and people with AI agents.

https://tools.simonwillison.net/colophon

The tools on tools.simonwillison.net were mostly built using AI-assisted programming.

This page lists the commit messages for each tool, many of which link to the LLM transcript used to produce the code.

The descriptions for each of the tools were generated using Claude 3.7 Sonnet.

This is such a cool use of a website. Using AI, Simon has generated a set of small utilities that are useful to him. If they're useful to others though, he's made them avaiable on his website.

https://mistral.ai/fr/news/mistral-small-3-1

Building on Mistral Small 3, this new model comes with improved text performance, multimodal understanding, and an expanded context window of up to 128k tokens. The model outperforms comparable models like Gemma 3 and GPT-4o Mini, while delivering inference speeds of 150 tokens per second.

Lightweight: Mistral Small 3.1 can run on a single RTX 4090 or a Mac with 32GB RAM. This makes it a great fit for on-device use cases.

https://ericmigi.com/blog/introducing-two-new-pebbleos-watches

Just pre-ordered my Pebble Core Time 2 Duo! Sadly it won't be here until December.

Go to https://store.repebble.com/ to get yours.

https://biilmann.blog/articles/introducing-ax/

Interesting post from Matt Biilman.

...the largest disruption from the current evolution of AI will come from bringing agency to computers.

Computers will perceive their environment and take actions autonomously in order to achieve goals.

Companies discovered that improving the DX of their products would empower and incentivize developers to extend their product with new capabilities and lead to huge competitive advantages. For developer tool companies, DX became a key competitive differentiator.

We need to start focusing on AX or “agent experience” — the holistic experience AI agents will have as the user of a product or platform.

Too many companies are focusing on adding shallow AI features all over their products or building yet another AI agent. The real breakthrough will be thinking about how your customers’ favorite agents can help them derive more value from your product. This requires thinking deeply about agents as a persona your team is building and developing for.

As AI agents start becoming useful and commonplace, we’re broadly going to see two approaches enabling agents to interact with the software we depend on:

A closed vertical approach, where companies tightly integrate their own agents into their own software. An open approach, where companies focus on making their software accessible to external agents.

Leaning into AX as a strategy, means embracing a vision of an open agent world. This vision aligns with the original ethos of the open web: a place where many diverse competing agents (built by different people or companies) can seamlessly interact with software on behalf of their users. Prioritizing AX makes it as simple as possible for any agent a user prefers, to deliver outcomes on their behalf.

Software designed for AI agents has the potential to deliver exponential value. As an industry we must collectively focus on building an open agent ecosystem and designing thoughtful AX to create a better, more open, and connected digital world.

https://www.inceptionlabs.ai/news

We are announcing the Mercury family of diffusion large language models (dLLMs), a new generation of LLMs that push the frontier of fast, high-quality text generation.

Mercury is up to 10x faster than frontier speed-optimized LLMs. Our models run at over 1000 tokens/sec on NVIDIA H100s, a speed previously possible only using custom chips.

https://www.inevitable.live/algorithm/first-post

This is just a place for me to share. I been wanting a lil blog for years. Somewhere to post random shit I fuck with where the audience is way smaller than it is on the social media platforms. Finally pulled the trigger, bare with us as we still developing this page and the layout.

This is cool!

I ran into this while reading the post Blogging for smaller audiences and deeper connections by Herbert Lui.

Looking at other posts, it looks like he or at least someone is actively posting.

https://nazhamid.com/journal/your-site-is-a-home/

While going through a backlog of personal blogs I haven't read in a few weeks, I'm noticing a trend in posts that express similar sentiments to those in Naz's post.

I'm happy to see more people choosing to have a place that gives them more autonomy and freedom to express themselves in whichever form makes the most sense for them.

Your site is a home.

Eventually, social networks were created...novelty and the promise of interconnectedness by gathering in a common town square to blast out whatever was going on in our lives eventually won out.

But you might have still had your website, your home to return to...the place you built.

Things started to change and instead of going home, you, and everybody else started to live in the town square.

There is a shape to your physical home. Arranged and organized in the ways that make sense for you, the reward is a space that works for you.

A platform or network doesn’t allow for much configuration. The town square isn’t owned by you.

People became absolutely reliant on the same gathering place...the convenience of seeing friends (sometimes) outweighed your other neighbors spouting garbage and hate. You came to rely on this place for everything.

You can still have a home.

I have very little at the town square, because it’s not a public one. It’s a walled-off town square, whose rules and borders change at the whims of those who created it.

Thanks for visiting my home. I’m glad you dropped by.

I’d love to see yours sometime.

In this guide, we will set up a multistreaming workflow using Azure, an Azure VM, MonaServer 2, and FFmpeg to broadcast to multiple platforms like LinkedIn Live and YouTube Live. We’ll use OBS Studio as the main streaming software. Let’s get started.

Love this detailed writeup from Maho.

When I originally experimented with something similar using my Owncast instance, I was running ffmpeg locally but I like his solution better.

https://unplatform.fromthesuperhighway.com/

Unplatform is an interactive guidebook, online library, and recommendations database intended to help you escape social media and join the indie web.

Love this guide.

https://arxiv.org/abs/2501.19393

Test-time scaling is a promising new approach to language modeling that uses extra test-time compute to improve performance. Recently, OpenAI's o1 model showed this capability but did not publicly share its methodology, leading to many replication efforts. We seek the simplest approach to achieve test-time scaling and strong reasoning performance. First, we curate a small dataset s1K of 1,000 questions paired with reasoning traces relying on three criteria we validate through ablations: difficulty, diversity, and quality. Second, we develop budget forcing to control test-time compute by forcefully terminating the model's thinking process or lengthening it by appending "Wait" multiple times to the model's generation when it tries to end. This can lead the model to double-check its answer, often fixing incorrect reasoning steps. After supervised finetuning the Qwen2.5-32B-Instruct language model on s1K and equipping it with budget forcing, our model s1-32B exceeds o1-preview on competition math questions by up to 27% (MATH and AIME24). Further, scaling s1-32B with budget forcing allows extrapolating beyond its performance without test-time intervention: from 50% to 57% on AIME24. Our model, data, and code are open-source at this https URL

https://www.nomic.ai/blog/posts/nomic-embed-text-v2

Today we're excited to announce Nomic Embed Text V2, our next-generation embedding model that brings the Mixture of Experts (MoE) architecture to text embeddings on a new expanded multilingual training dataset.

Personally, I found the part on MoE to be the most interesting about this release.

Rather than a dense model which uses all parameters on an input, the MoE architecture dynamically routes to different "experts" - sparse subsets of parameters at each layer - activating, ideally, only the parameters especially needed to process the input. This approach allows for more efficient use of compute when generating embeddings.

In our experiments, we found that alternating MoE layers with 8 experts and top-2 routing provides the optimal balance between performance and efficiency. This results in 475M total parameters in the model, but only 305M active during training and inference.

Research into embedding model architecture has significant practical implications for working with text embeddings in production:

- Lower latency for high-volume applications of embeddings like retrieval

- Reduced deployment costs through more efficient parameter usage

- More accessibility to embeddings in settings with constrained compute

https://www.anthropic.com/news/claude-3-7-sonnet

Today, we’re announcing Claude 3.7 Sonnet1, our most intelligent model to date and the first hybrid reasoning model on the market. Claude 3.7 Sonnet can produce near-instant responses or extended, step-by-step thinking that is made visible to the user. API users also have fine-grained control over how long the model can think for.

Claude 3.7 Sonnet shows particularly strong improvements in coding and front-end web development. Along with the model, we’re also introducing a command line tool for agentic coding, Claude Code. Claude Code is available as a limited research preview, and enables developers to delegate substantial engineering tasks to Claude directly from their terminal.

Claude 3.7 Sonnet and Claude Code mark an important step towards AI systems that can truly augment human capabilities. With their ability to reason deeply, work autonomously, and collaborate effectively, they bring us closer to a future where AI enriches and expands what humans can achieve.

https://huggingface.co/spaces/nanotron/ultrascale-playbook

Gold.

All the techniques we'll cover in this book tackle one or several of the following three key challenges, which we'll keep bumping into throughout the book:

1. **Memory Usage**: it's a hard limitation - if a training step doesn't fit in memory, training cannot proceed 2. **Compute Efficiency**: we want our hardware to spend most time computing, so we need to reduce time spent on data transfers or waiting for other GPUs to perform work. 3. **Communication overhead**: we want to minimize communication overhead as it keeps GPUs idle. To archieve this we will try to make best use of intra-node (fast) and inter-node (slower) bandwidths as well as overlap communication with compute as much as possible.

In many places we'll see that we can trade one of these (computation, communication, memory) for another (e.g. recomputation or Tensor Parallelism). Finding the right balance is key to scaling training.

https://www.youtube.com/watch?v=-7e6g11BJc0

I didn't watch the Super Bowl this year so I didn't get a chance to watch the ads until a few days later.

From the ones I saw, I think Google's was my favorite.

Apart from the touching story, what stood out to me the most was its focus on humanity, with technology playing a supporting role rather than being the main character.

https://www.youtube.com/watch?v=VOEeNffChSg

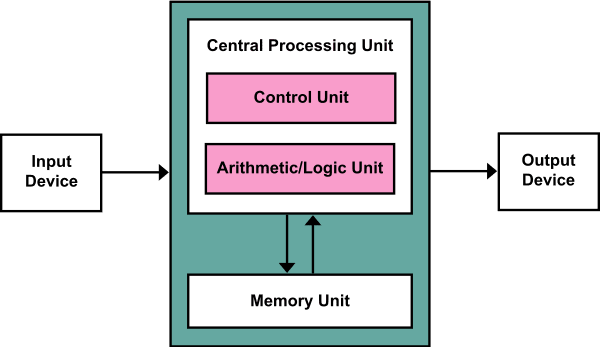

Great session on Tensors in .NET by Tanner

Tensors are the foundational data structure powering man AI workloads today.

Introduced in .NET 9, Tensors builds on top of earlier work like TensorPrimitives, hardware intrinsics, and generic math.

You can check out the recording below to learn more.

https://devblogs.microsoft.com/dotnet/announcing-generative-ai-for-beginners-dotnet/

If you're a .NET developer looking to get started with Generative AI, there's a new course for beginners that just launched.

Through a series of self-paced lessons, you'll learn the foundations to help you start using AI to augment the capabilities of your .NET applications.

Check out the course and give us feedback!

https://apps.microsoft.com/detail/9n9pn1mm3bd5

So excited to see the AI Dev Gallery is now in the Microsoft Store.

Through various interactive samples, developers get to see how they might use AI for different tasks like text classification, object detection, and many others.

Best of all, the models are all running locally and you get to see and export the C# code powering the samples to Visual Studio so you can continue tinkering on your own and integrate into your own applications.

A few months ago, the team came on the .NET AI Community Standup to showcase the app. Since then, it's only kept improving and introducing new scenarios.

You can check out the recording from that stream here.

https://huggingface.co/learn/agents-course/unit0/introduction

This free course will take you on a journey, from beginner to expert, in understanding, using and building AI agents.

At the end of this course you’ll understand how Agents work and how to build your own Agents using the latest libraries and tools.

https://andysblog.uk/why-blog-if-nobody-reads-it/

There is a hidden value in blogging... You do it not for the applause but because it needs doing.

You write because you think, because you observe, because you need to put it somewhere.

If someone reads it? Bonus. If not? The work still got done.

💯💯💯

I agree with many of the points in Andy's post.

I don't have analytics on my website, so I couldn't tell you who or how many people are visiting it.

And that's okay.

When someone links to content they found on my website and tags me or sends me an e-mail letting me know something on my website is broken is when I know there's at least one person.

Many of the content on here serves as a reminder of how I've solved problems for myself. The microposts and responses are observations that capture a moment in time. If the messages reach someone on the other end and either solve their problem or resonate with them, I'm happy that happens, but I see that as a bonus.

https://ericmigi.com/blog/why-were-bringing-pebble-back

TL;DR We’re making a new Pebble-style smartwatch.

This is amazing news! By the time I decided to get a Pebble, they'd already been sold to Google.

I agree with Eric's points here:

I've tried every single smart watch out there, but none do it for me. No one makes a smartwatch with the core set of features I want:

- Always-on e-paper screen (it’s reflective rather than emissive. Sunlight readable. Glanceable. Not distracting to others like a bright wrist)

- Long battery life (one less thing to charge. It’s annoying to need extra cables when traveling) Simple and beautiful user experience around a core set of features I use regularly (telling time, notifications, music control, alarms, weather, calendar, sleep/step tracking)

- Buttons! (to play/pause/skip music on my phone without looking at the screen)

- Hackable (apparently you can’t even write your own watchfaces for Apple Watch? That is wild. There were >16k watchfaces on the Pebble appstore!)

Garmin is the closest I've come to it at least on the battery life and buttons front. Pebble was one-of-a-kind.

I already signed up to stay up to date with the project and plan on being a day one customer. You can too at repebble.com.

P.S. While not surprised, I didn't know Eric was behind another amazing project I'm a fan of, Beeper.

https://xuanwo.io/links/2025/01/link-blog/

Great to see more people sharing knowledge and linking to others from their own websites / platforms.

I decided to follow simon's approach to creating a link blog, where I can share interesting links I find on the internet along with my own comments and thoughts about them.

...this is an excellent way to share knowledge while also keeping a personal record. It’s much better than simply saving something to Readwise to read later and leaving a few highlights or dull comments like "interesting."

😂😂😂

This website is amazing.

We already finalized our OKRs for H1 but if you throw it in the backlog maybe we can get it prioritized in H2 planning

This could be part of a larger initiative, but let’s hold off for now

We should validate that with users first

https://newsletter.languagemodels.co/p/the-illustrated-deepseek-r1

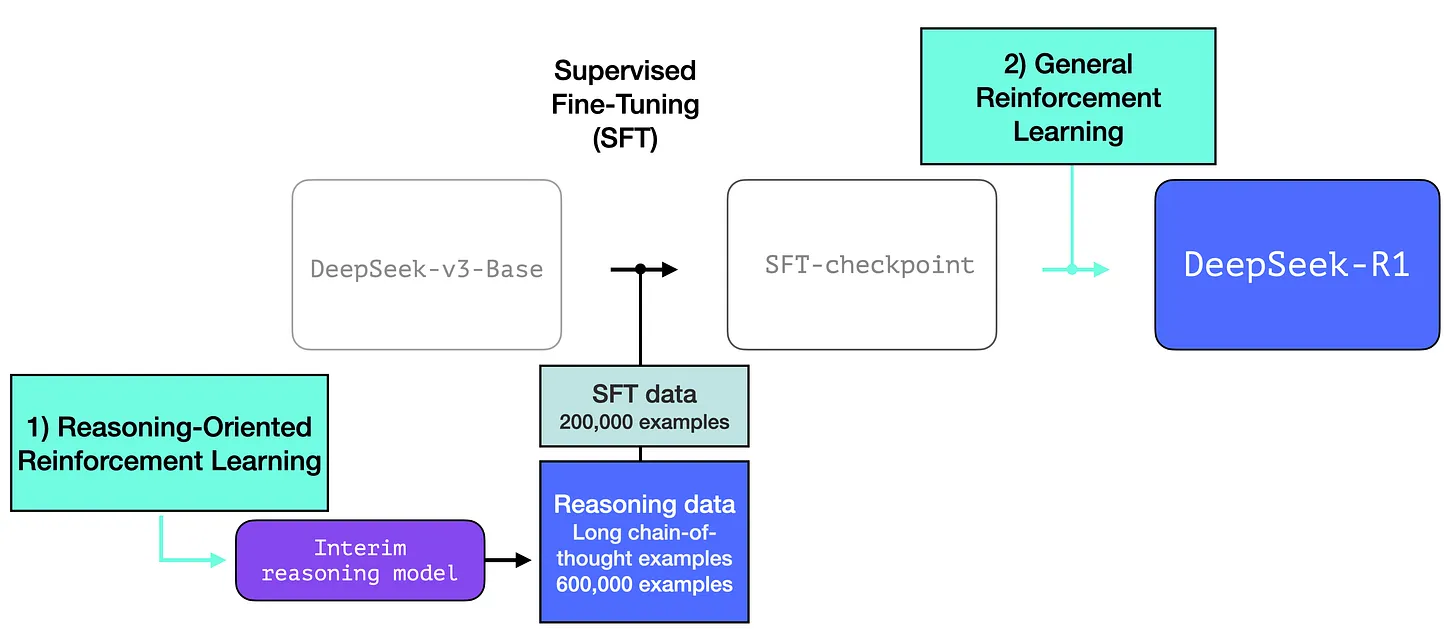

Just like most existing LLMs, DeepSeek-R1 generates one token at a time, except it excels at solving math and reasoning problems because it is able to spend more time processing a problem through the process of generating thinking tokens that explain its chain of thought.

https://openai.com/index/introducing-deep-research/

An agent that uses reasoning to synthesize large amounts of online information and complete multi-step research tasks for you.

Powered by a version of the upcoming OpenAI o3 model that’s optimized for web browsing and data analysis, it leverages reasoning to search, interpret, and analyze massive amounts of text, images, and PDFs on the internet, pivoting as needed in reaction to information it encounters.

How it works

Deep research was trained using end-to-end reinforcement learning on hard browsing and reasoning tasks across a range of domains. Through that training, it learned to plan and execute a multi-step trajectory to find the data it needs, backtracking and reacting to real-time information where necessary. The model is also able to browse over user uploaded files, plot and iterate on graphs using the python tool, embed both generated graphs and images from websites in its responses, and cite specific sentences or passages from its sources. As a result of this training, it reaches new highs on a number of public evaluations focused on real-world problems.

Part 8 of "A guide to implement ActivityPub in a static site (or any website)" by maho.dev recently dropped.

This part goes over how to add replies and comments to your static site.

https://www.wisn.com/article/bucees-plans-first-wisconsin-travel-center-in-oak-creek/63527062

Buc-ee's, the popular Texas-based travel center chain, is set to open its first Wisconsin location in Oak Creek, city officials announced.

Time to get my banana pudding fix.

https://manuelmoreale.com/short-long-form

This is something I've been dealing with on my site. I separate my long-form from short-form posts. However, I don't have a hard limit that clearly defines which is which. An initial reason for the separation was, I wanted to have a feed that was easy to scroll through. That was harder to do if I was rendering an entire long-form post as part of the feed. In the end though, most of my posts are all markdown files and their main distinction is how they are rendered.

As I work on my website redesign, I want to change how I think about posts. I want to separate the post content from the rendering. A post is a post regardless of content and length. Regardless of whether it's a response, image, video, note, or anything in between, I don't want to constrain the content of my post. I can choose to render posts differently depending on whether it's being viewed in a feed or individual post page. The content driving those different views though shouldn't change.

https://lepisma.xyz/2025/01/17/emacs-on-device-ml/index.html

This is a cool walk-through of how to add semantic search to Emacs using local ML models.

With ONNX.el you can run any ML model inside Emacs, including ones for images, audios, etc. In case you are running embedding models, combine this with sem.el to perform semantic searches. Depending on your input modality, you might have to figure out preprocessing. For text, tokenizers.el should cover all of what you need in modern NLP for preprocessing, though you will have to do something on your own for anything non-text or multimodal.

By default sem runs a local all-MiniLM-L6-v2 model for text embeddings. This model is small and runs fast on CPU. Additionally, we load an O2 optimized variant which helps further. The vectors are stored in a lancedb database on file system which runs without a separate process. I was originally writing the vector db core myself to learn more of Rust, but then stopped doing that since lancedb gives all that I needed, out of the box.

https://www.mollywhite.net/micro/entry/202501190959

...you needed to move anything you care about online to a space you control. Digital sovereignty is more important than ever.

💯💯💯💯💯

Best time was yesterday. Next best time is now.

https://www.figma.com/blog/making-space-for-a-handmade-web/

Love this article.

Before the web was more commercialized, it was personal...A website was a way to represent yourself and connect to others, a space to tend and call your very own.

The imperfect, handmade character of websites has been sanded away in favor of efficiency, and social media platforms have risen in the stead of amateur sites. Many of us conform to these containers and retreat from expressing our authentic selves publicly...

If the idea of a more handcrafted internet resonates with you, the best way to be part of the movement is simply to make your own website. This may seem intimidating if we expect webpages to be a holistic reflection of ourselves, like a resume, portfolio, or blog. We limit the web as a medium when we expect that a website must fulfill a host of needs, or serve any function at all. A website doesn’t need to be anything but your own.

A more personal web becomes possible once we turn away from our preconceived notions of what a website must be. Let’s use our tools to continuously push at the boundaries of the web. Doing so will create a more creative, expansive environment that allows us to reclaim our agency and reinvigorate our collective understanding of software as an artisanal craft. Instead of concentrating on a few, monolithic sites, what if we visited many, more personalized sites? We should explore the far reaches of the web and invite our friends to join in: After all, we make the internet, and only we can build the web we want.

https://apnews.com/article/david-lynch-dies-9107f3ce0b4dd49dbe3dc2ae3c09ed59

David Lynch, the filmmaker celebrated for his uniquely dark and dreamlike vision in such movies as “Blue Velvet” and “Mulholland Drive” and the TV series “Twin Peaks,” has died just days before his 79th birthday.

I didn't understand all of his work, but I appreciate his originality and overall aesthetic.

https://arxiv.org/abs/2403.17392

I'm going to tell future generations, this is how we built multi-agent systems back in my day.

Cyborg insects refer to hybrid robots that integrate living insects with miniature electronic controllers to enable robotic-like programmable control. These creatures exhibit advantages over conventional robots in adaption to complex terrain and sustained energy efficiency. Nevertheless, there is a lack of literature on the control of multi-cyborg systems. This research gap is due to the difficulty in coordinating the movements of a cyborg system under the presence of insects' inherent individual variability in their reactions to control input. Regarding this issue, we propose a swarm navigation algorithm and verify it under experiments. This research advances swarm robotics by integrating biological organisms with control theory to develop intelligent autonomous systems for real-world applications.

https://news.itsfoss.com/ai-subtitles-vlc/

Shown off at CES 2025 is VLC's upcoming automatic subtitling feature, which is local in nature, running entirely on a user's machine without needing to connect to some distant server.

According to Jean-Baptiste Kempf, the creator and lead developer of VLC, this new feature is powered by open source AI models, which carry out subtitle generation tasks in two stages.

One aspect is automatic generation of subtitles from the video, and the other is translation of those subtitles into over 100 languages.

Unfortunately, there's no word on when this feature will be arriving on VLC.

https://josebriones.substack.com/p/the-future-of-calm-technology-with

Interesting interview and introduction to Calm Technology and the Calm Tech Institute.

https://ellanew.com/ptpl/139-2025-01-13-be-the-landlord-of-your-notes

When you choose to keep your notes in locally stored plain text files, you’re choosing to be your own landlord. Keeping notes in a proprietary app is the same as renting: the land you’re building on belongs to someone else, with no guarantee of continuing access.

This is so true for many other areas of our digital lives as well.

https://nesslabs.com/a-year-of-curiosity

When we encounter something new and interesting, our brains release dopamine – the same neurotransmitter associated with reward and pleasure. This creates a simple pattern: the more we learn, the more we want to learn.

This drive to explore and understand isn’t just nice to have – it’s essential to who we are as humans.

There’s no single “right” way to be curious. Learning practical skills like coding or cooking, diving into topics like history or science, joining groups of people who share your interests… These are all great ways to inject more curiosity into your life.

Designing a year of curiosity

- Monthly: Design one tiny experiment at the beginning of each month This could be as simple as exploring a topic you know nothing about, trying a new hobby, or doing something that pushes you out of your comfort zone...

- Weekly: Every week, take 10 to 15 minutes to conduct a weekly review. What went well this week? What didn’t go as planned? What will you focus on next week? This could mean doubling down on what worked or tweaking something that didn’t...

- Daily: Create at least one moment of curiosity in your day, no matter how small. Experiment with a new recipe or tool, have one meaningful conversation, try a journaling prompt, or take a different route to work. Even one minute of curiosity a day can add up to a much richer life.

https://huyenchip.com/2025/01/07/agents.html

The unprecedented capabilities of foundation models have opened the door to agentic applications that were previously unimaginable. These new capabilities make it finally possible to develop autonomous, intelligent agents to act as our assistants, coworkers, and coaches. They can help us create a website, gather data, plan a trip, do market research, manage a customer account, automate data entry, prepare us for interviews, interview our candidates, negotiate a deal, etc. The possibilities seem endless, and the potential economic value of these agents is enormous.

This section will start with an overview of agents and then continue with two aspects that determine the capabilities of an agent: tools and planning. Agents, with their new modes of operations, have new modes of failure. This section will end with a discussion on how to evaluate agents to catch these failures.

https://www.kaggle.com/whitepaper-agents

Humans are fantastic at messy pattern recognition tasks. However, they often rely on tools - like books, Google Search, or a calculator - to supplement their prior knowledge before arriving at a conclusion. Just like humans, Generative AI models can be trained to use tools to access real-time information or suggest a real-world action. For example, a model can leverage a database retrieval tool to access specific information, like a customer's purchase history, so it can generate tailored shopping recommendations. Alternatively, based on a user's query, a model can make various API calls to send an email response to a colleague or complete a financial transaction on your behalf. To do so, the model must not only have access to a set of external tools, it needs the ability to plan and execute any task in a self- directed fashion. This combination of reasoning, logic, and access to external information that are all connected to a Generative AI model invokes the concept of an agent, or a program that extends beyond the standalone capabilities of a Generative AI model. This whitepaper dives into all these and associated aspects in more detail.

https://blog.archive.org/2025/01/01/welcome-to-the-public-domain-in-2025/

On January 1, 2025, we celebrate published works from 1929 and published sound recordings from 1924 entering the public domain! The passage of these works into the public domain celebrates our shared cultural heritage. The ability to breathe new life into long forgotten works, remix the most popular and enduring works of the time, and to better circulate the oddities we find in thrift stores, attics, and on random pockets of the internet are now freely available for us all.

While not at the same blockbuster level as 2024 with Steamboat Willie’s passage into the public domain, works from 1929 still inhabit strong cultural significance today. The works of 1929 continue to capture the Lost Generation’s voice, the rise of sound film, and the emerging modern moment of the 1920s.

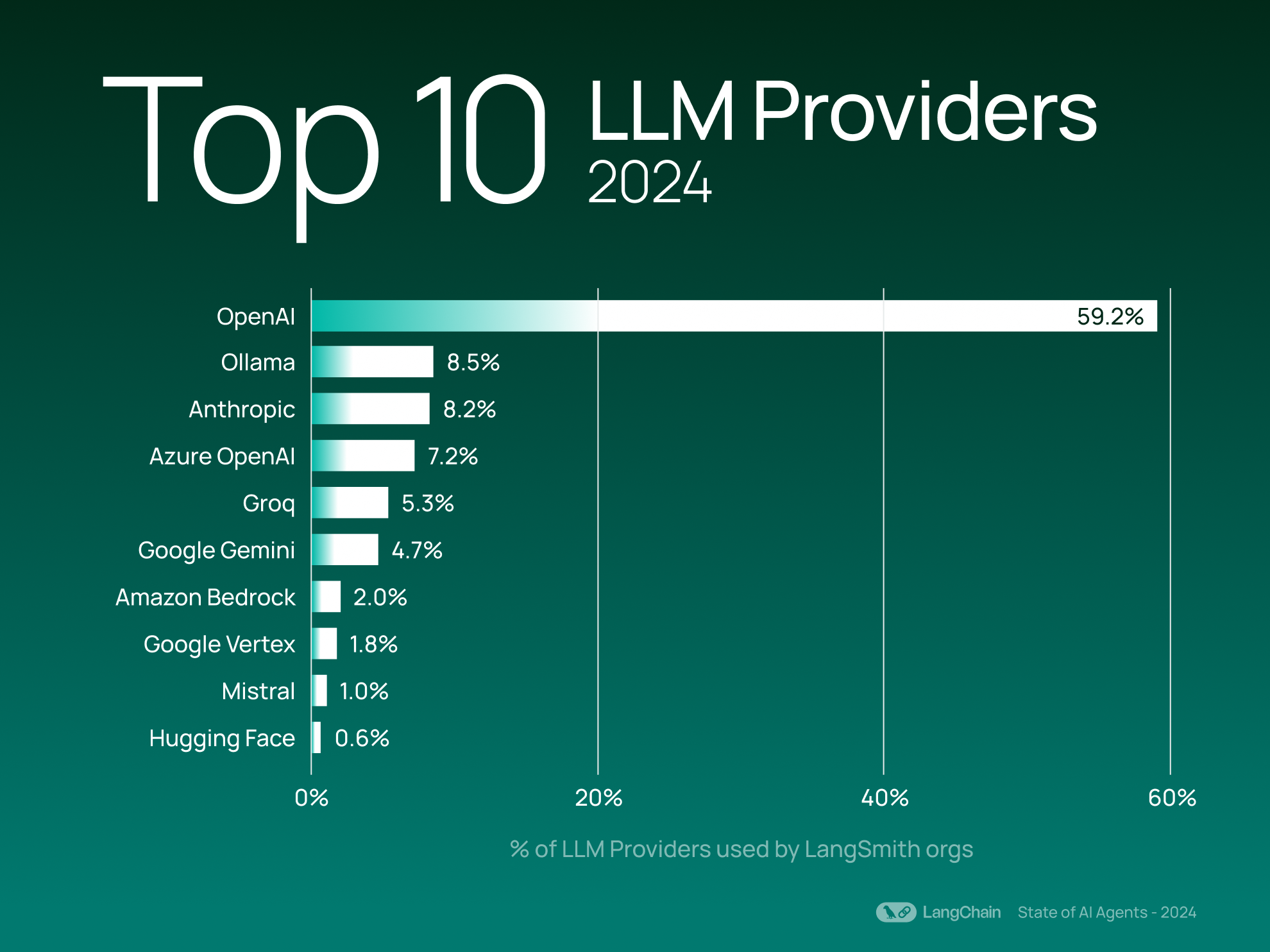

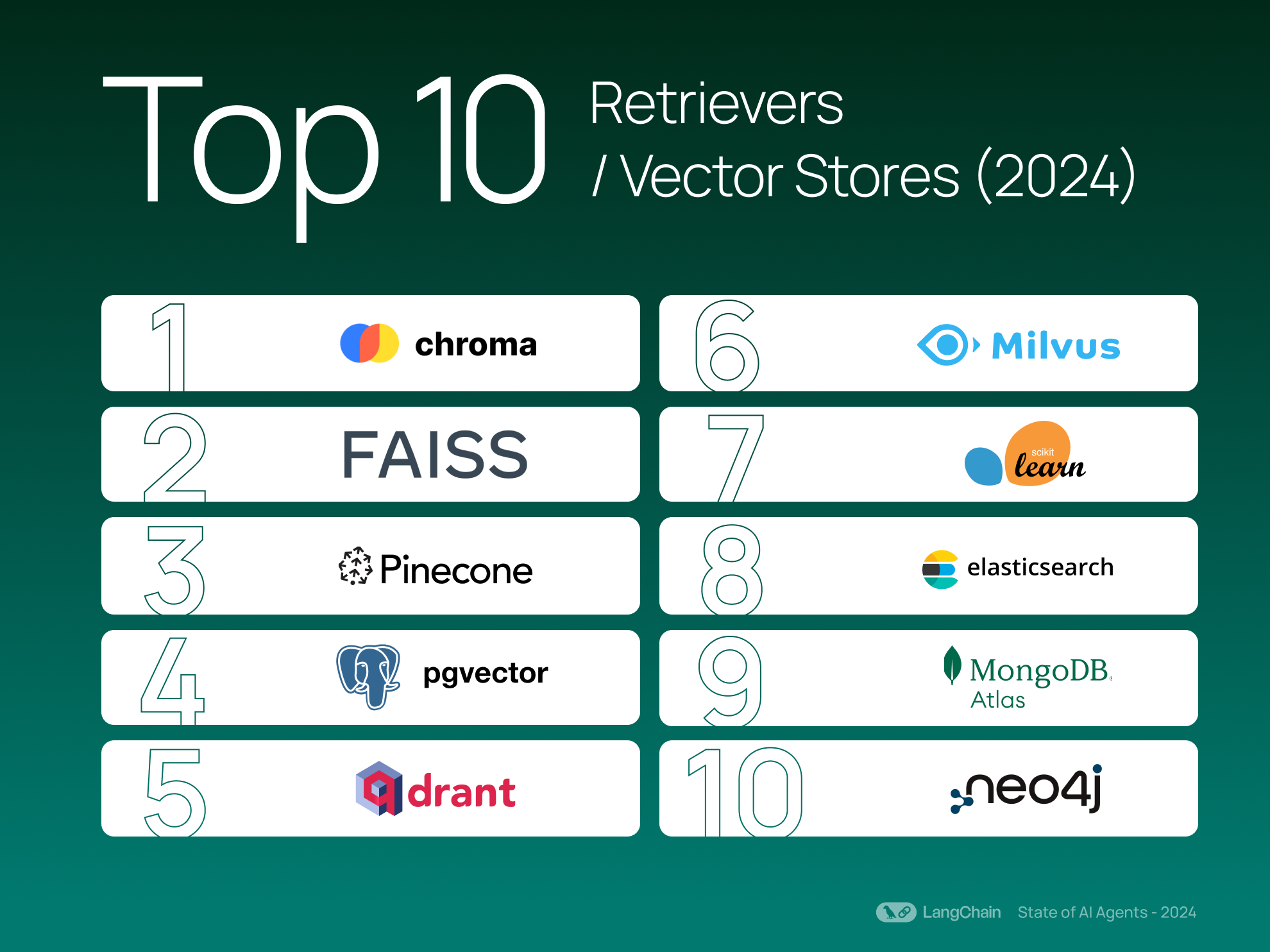

https://blog.langchain.dev/langchain-state-of-ai-2024/

In 2024, developers leaned into complexity with multi-step agents, sharpened efficiency by doing more with fewer LLM calls, and added quality checks to their apps using methods of feedback and evaluation.

https://github.com/microsoft/markitdown

MarkItDown is a utility for converting various files to Markdown (e.g., for indexing, text analysis, etc). It supports:

- PowerPoint

- Word

- Excel

- Images (EXIF metadata and OCR)

- Audio (EXIF metadata and speech transcription)

- HTML

- Text-based formats (CSV, JSON, XML)

- ZIP files (iterates over contents)

https://github.com/muni-town/agentic-fediverse

The "Agentic Fediverse" is the idea of a new kind of network federation, a complementary iteration on the concept of federation currently established with ActivityPub. It's an experiment and something that we in Muni Town are still trying to define more concretely.

Tenets

We are still working on defining the core tenets of the agentic fediverse, but here is what we have so far.

An agentic fediverse is...

- A fediverse of agents; agent-centric, as opposed to server-centric.

- Local-first; solve local problems for local people.

- Accessible by default; inaccessibility is a bug.

- Systematically consensual; architected on the basis of informed consent.

https://www.anew.social/hello-social-web/

We're A New Social, a new non-profit organization focused on building cross-protocol tools and services for the open social web.

Our mission is to liberate people's networks from their platforms, enabling The Last Network Effect and leveling the playing field across the open social web.

The first project we'll take on to accomplish this mission is Bridgy Fed, a service that enables users of ActivityPub-based platforms like Mastodon, ATProto-based platforms like Bluesky, and websites to interact and engage across ecosystems.

https://engineering.tumblr.com/post/722102563011493888/streambuilder-our-open-source-framework-for

Interesting post. Whether building your feeds or plugging into existing platforms like Bluesky, this framework could serve as a good starting point.

Today, we’re abnormally jazzed to announce that we’re open-sourcing the custom framework we built to power your dashboard on Tumblr. We call it StreamBuilder, and we’ve been using it for many years.

StreamBuilder has a lot going on. The primary architecture centers around “streams” of content: whether posts from a blog, a list of blogs you’re following, posts using a specific tag, or posts relating to a search. These are separate kinds of streams, which can be mixed together, filtered based on certain criteria, ranked for relevancy or engagement likelihood, and more.

So, what’s included in the box?

- The full framework library of code that we use today, on Tumblr, to power almost every feed of content you see on the platform.

- A YAML syntax for composing streams of content, and how to filter, inject, and rank them.